Dentistry Has an Inconsistency Problem

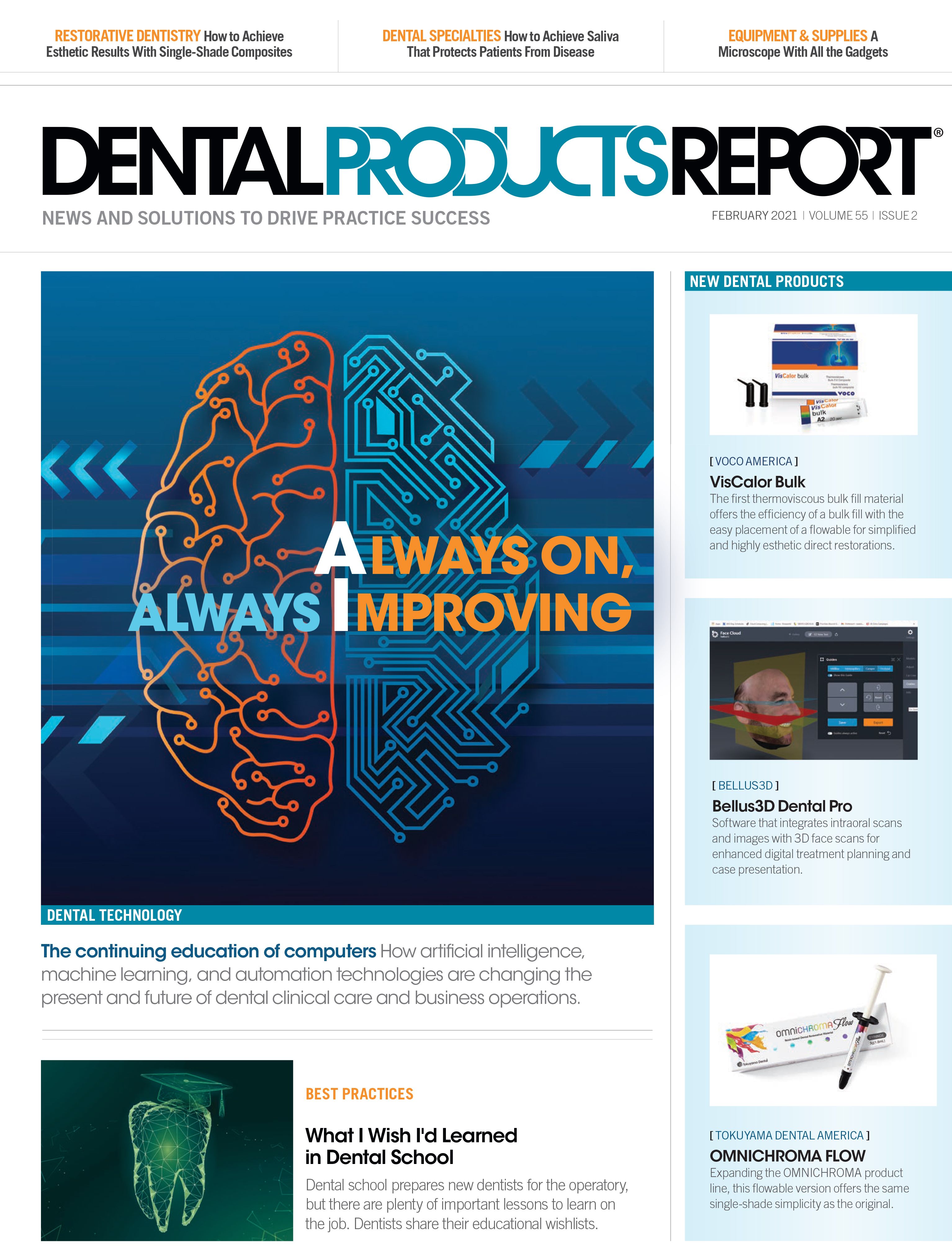

Artificial intelligence is highlighting the fallibility and inconsistency of human clinicians' diagnostic evaluations of radiographic images.

Sergey Nivens / stock.adobe.com

A 19th-century Italian astronomer named Giovanni Schiaparelli peered at the Red Planet through his telescope and thought he saw lines crisscrossing its surface. He reported these as canals, and pretty soon other astronomers were seeing them too.

A Martian civilization engaged in public works projects was simply a figment of their imagination. But there’s a lesson in those Martian canals for dentists searching for patterns in often fuzzy-looking radiographs. We are just as likely to imagine something that isn’t there as to overlook something that is.

There It Is, Even When You’re Not Looking for It

I recently co-authored a study of diagnostic agreement among 3 dentists and an artificial intelligence (AI)-based detection system, asking them to identify evidence of carious lesions in 8767 bitewing and periapical x-rays. It was a seemingly elementary diagnostic task. The surprising result was not that AI could outperform its human counterparts (we expected that) but that there was so little consistency among the humans.

The dentists disagreed among themselves in fully a quarter of all cases. The likelihood that 1 would identify decay in a given image (21%) was almost 5 times greater than the likelihood that all 3 would (4.2%). One found decay in 5.3% of all cases, another in 8.6%, and the third in 15.9%. The most “sensitive”—or imaginative—dentist was 3 times as likely as the least sensitive one to perceive decay.
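For readers who want to check the arithmetic, the ratios can be recomputed from the rounded percentages quoted above. This is only a rough sketch: the variable names are illustrative, the 21% figure is assumed to mean that at least 1 of the 3 dentists found decay, and because the inputs are rounded the results are approximate.

```python
# Rounded percentages as quoted in the text (share of images flagged as showing decay).
# Assumption: 21% is read as "at least 1 of the 3 dentists identified decay".
at_least_one_pct = 21.0
all_three_pct = 4.2
per_dentist_pct = [5.3, 8.6, 15.9]  # one rate per dentist, least to most sensitive

# How much more likely is a finding by at least 1 dentist than a unanimous finding?
one_vs_all = at_least_one_pct / all_three_pct

# How much more likely is the most sensitive dentist to see decay than the least sensitive?
most_vs_least = max(per_dentist_pct) / min(per_dentist_pct)

print(f"At least one vs. all three: about {one_vs_all:.1f}x")
print(f"Most vs. least sensitive dentist: about {most_vs_least:.1f}x")
```

Both ratios come out close to the figures in the text: roughly 5 and roughly 3.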

Subsequently, we helped facilitate a study for the Dental AI Council (DAIC), asking nearly 140 dentists to review a single full-mouth series and provide precise diagnostic evaluations and treatment recommendations. Those data show that when greater specificity is demanded, variability in both diagnosis and treatment planning increases as well.

Me, Inconsistent? No Way.

For dental clinicians, fallibility is a hard pill to swallow. We’re highly competent individuals, rigorously trained and inherently self-respecting. We could not, in good conscience, take on the risks that accompany most procedures were we not. But those characteristics may explain why we bristle when diagnostic inconsistency is flagged as a widespread problem with concerning implications for the quality of care in our field. “When I review an x-ray, I use my training, not my imagination,” we tell ourselves.

As a dentist, I’m sympathetic to that response, but as someone developing AI diagnostic systems, I’m troubled by it. Eliminating inconsistency is one of AI-assisted diagnostics’ most obvious benefits, but whenever that benefit is plugged publicly, or really whenever inconsistency concerns of any kind are raised, a vocal contingent invariably cries foul. They argue that inconsistency is neither so widespread nor so harmful as suggested, and that it is due to inexperience and neglect among a small cohort within the community.

If we were talking about intentional overdiagnosis, overtreatment, and other forms of fraud that have contributed to the unflattering portraits of our profession published over the years, most recently in The Atlantic and USA Today, we could buy the “few rotten apples spoiling the barrel” argument. But, in fact, we’re talking almost entirely about differences of opinion and unintentional mistakes. These can, indeed, be due to inexperience and neglect, but they arise far more often from our individuality and fallibility.

Differences separate all of us, no matter our level of experience, and, though some are loath to admit it, to err is human. Variations in judgment and human errors are routine. Are they harmful? In any single case, usually not, at least not seriously. But across an entire population, their impact is dramatically amplified.

Let’s Just Accept It, So We Can Move On

To deny the ubiquity and harm of our inconsistency is a disservice to our profession, our patients, and the future of oral health care. Though the stark results of the DAIC diagnosis and treatment study mentioned earlier put a fine point on it, no study is required to draw a direct line from dental diagnosis to treatment. A series of subsequent lines then quickly takes you from treatment to patient outcomes and, finally, to overall public health. That’s why widespread inconsistency is a problem. But that’s not the only reason.

Inconsistencies are a cause of friction between dentists and patients, and between dentists and insurers. They increase our vulnerability to malpractice litigation. And they do reputational damage to the profession.

When we can admit our inconsistency problem, we can begin to fix it. We slow down. We undertake our work with extra care. We are not vexed when our patients want a second opinion. We welcome it. We seek it, in fact. And we embrace novel methods to ensure we achieve a consistent standard of care.

The Inconsistency Terminator

Indeed, the advent of AI tools for dentistry just may give us that consistent standard. After all, AI has several big advantages over humans. Speed, for one. Total recall, for another. It never has bad days or loses sensitivity. With an AI assistant, a dentist can no longer be dismissed by a skeptical patient as just another expensive white coat with an opinion. Most people trust computers, and for good reason.

Not that AI replaces the practitioner, as many seem to fear. The ultimate interpretation and treatment decisions will remain ours. But from the patient’s point of view, AI adds scientific objectivity to our individual judgment.

Today, if we want to know what’s on Mars, we don’t rely on vague impressions; we send a probe. Soon, if we want to confirm our interpretation of the light and shadow in an x-ray, we’ll ask an AI to probe it.

But first, we’ll need to learn to admit that we need the help.